What This Audit Covers

You do not need to know what a RAG pipeline is. You do not need to understand cosine similarity or token budgets or heading hierarchy semantics.

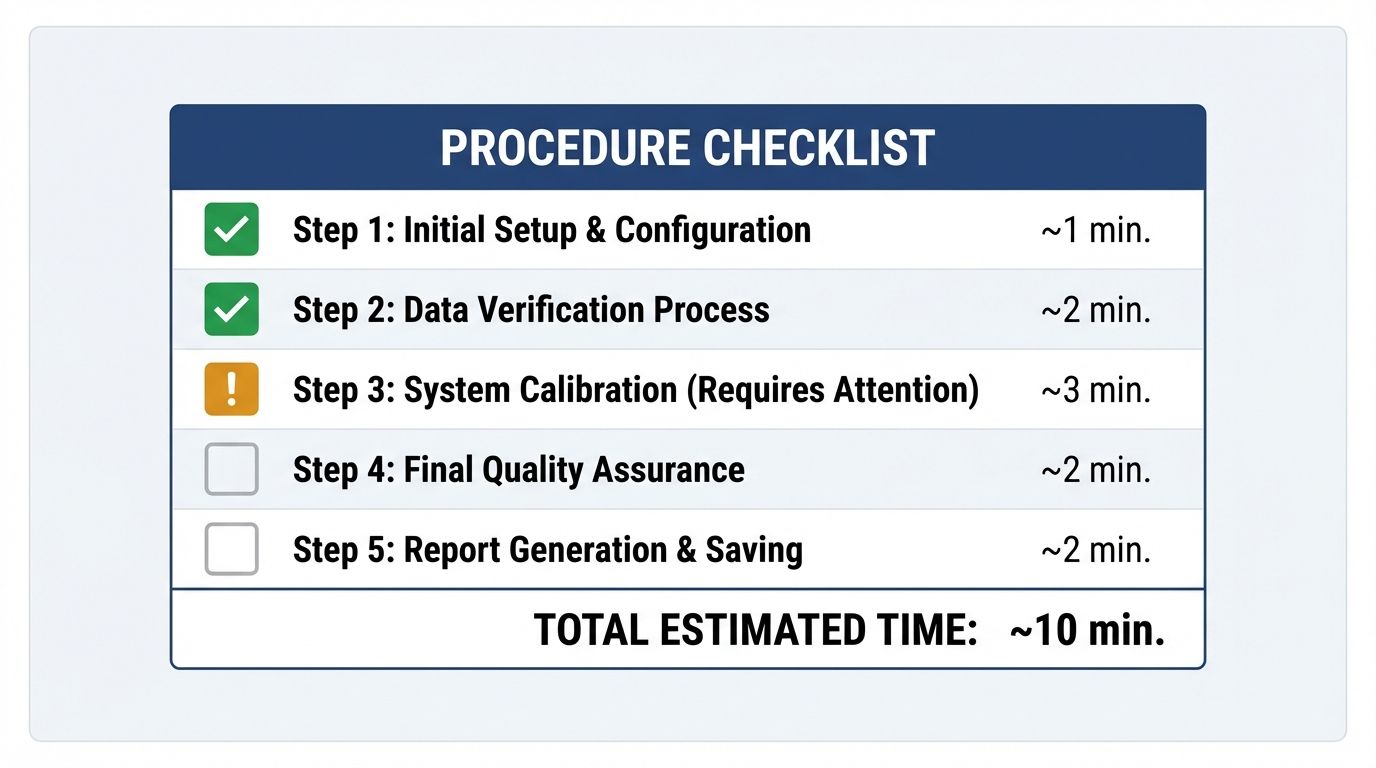

You need a browser, ten minutes, and this checklist.

AI readability is not exclusively a developer problem. Marketers, content leads, and founders can identify the most common and most damaging visibility gaps without writing a single line of code. The fixes may require developer time, but finding the problems does not. And knowing exactly what is broken — and why — is what turns a vague "we should do something about AI search" conversation into a specific, scoped work ticket.

Here is the complete 10-minute audit you can run on any page, right now.

Step 1: The Raw Source Check (2 minutes)

Open the page you want to audit in Chrome. Press Ctrl+U on Windows or Cmd+Option+U on Mac. This opens the raw HTML source — not the browser's rendered view, the actual code the server sent before any JavaScript ran.

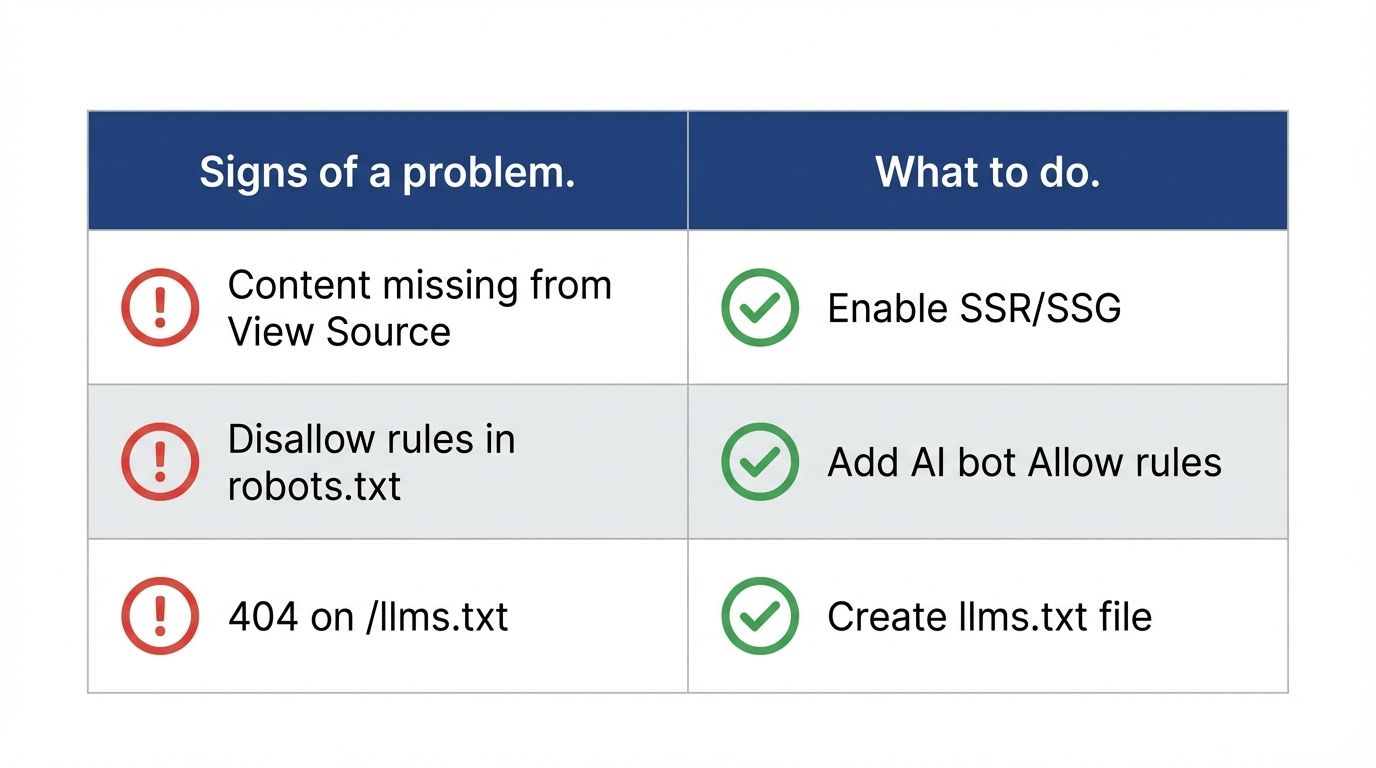

Press Ctrl+F (Cmd+F on Mac) and search for the first sentence of your main body copy. If you find it: your content is server-rendered. Good.

If you cannot find it: your content is client-side rendered and invisible to most AI crawlers. This is the most common and most damaging AEO failure, and it explains more AI invisibility than any other single issue. Every other step in this audit assumes your content is actually reachable — if this check fails, that is your entire sprint right there.

What to do: Flag this for your developer with the exact label: "We need SSR (Server-Side Rendering) enabled so our content appears in the initial HTML response, not just after JavaScript loads." That sentence is enough context for any developer to understand the fix.
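If a developer wants to repeat this check across many pages, the same test can be scripted. The sketch below is a minimal illustration, not a full crawler simulation: it downloads the initial HTML response without running JavaScript and looks for a sentence in it. The function names and the user-agent string are hypothetical, and a sentence that contains inline tags or HTML entities in the source can produce a false negative.

```python
import re
import urllib.request


def normalize(text: str) -> str:
    """Collapse runs of whitespace and lowercase the text for a forgiving comparison."""
    return re.sub(r"\s+", " ", text).strip().lower()


def sentence_in_raw_html(raw_html: str, sentence: str) -> bool:
    """Return True if the sentence appears in the HTML as the server sent it."""
    return normalize(sentence) in normalize(raw_html)


def fetch_raw_html(url: str) -> str:
    """Download the initial HTML response. No JavaScript is executed."""
    request = urllib.request.Request(
        url,
        headers={"User-Agent": "raw-source-check/0.1"},  # hypothetical label
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


# Usage (replace the URL and sentence with your own):
#   html = fetch_raw_html("https://example.com/")
#   print(sentence_in_raw_html(html, "First sentence of your body copy."))
```

A result of False on a page whose copy is visible in the browser points to the same conclusion as the manual check: the content is being added by JavaScript after the initial response.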

Step 2: The Robots.txt Check (1 minute)

In your browser's address bar, type your domain followed by /robots.txt and press Enter.

You will see a plain text file. Look specifically for a Disallow: / line in the group that starts with User-agent: *. If that rule exists and no separate group names the major AI crawlers with its own Allow rule, you may be blocking the entire AI web from accessing your site.

Also check: is GPTBot mentioned anywhere? If not, your robots.txt has probably never been reviewed for AI access policy, and it may predate the modern AI crawler era.

What to do: Send the file to your developer and ask them to: (1) check whether any blanket disallow rules are blocking AI crawlers, and (2) add explicit Allow: / rules for GPTBot, PerplexityBot, and ClaudeBot if they are not present. This is a plain text file change — it typically takes under 30 minutes.
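For the developer handling this ticket, the effect of a robots.txt file on the three crawlers named above can be checked with Python's standard-library robots parser. This is a sketch under stated assumptions: the example file contents and the page URL are hypothetical, and the standard-library parser may not match every crawler's own interpretation of the rules.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens taken from the crawlers named in this step.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]

# Hypothetical file: a blanket disallow plus explicit groups for AI crawlers.
EXAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: *
Disallow: /
"""


def ai_crawler_access(robots_txt: str, page_url: str) -> dict[str, bool]:
    """Map each AI crawler name to whether robots.txt lets it fetch page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, page_url) for bot in AI_CRAWLERS}


# Usage:
#   print(ai_crawler_access(EXAMPLE_ROBOTS_TXT, "https://example.com/pricing"))
```

A crawler with its own User-agent group follows that group instead of the User-agent: * group, which is why the explicit Allow rules take effect even when a blanket disallow is present.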

Step 3: The llms.txt Check (30 seconds)

Navigate to your domain followed by /llms.txt. If you get a 404 error page: you do not have an llms.txt file. This is the fastest-to-implement, lowest-competition AI visibility signal available right now: only 0.2% of sites in our 1,500-site study had one.

If you get a page with text content: you have one. Read the first few lines — does it describe your site accurately? Does it link to your most important pages?

What to do: If it does not exist, ask your developer to create a Markdown text file named llms.txt at the root of your domain. The minimum viable version is five lines: your site name, a one-sentence description, and two or three links to your most important pages with brief annotations. Full details are in our llms.txt guide. Setup time: under an hour.
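As a rough illustration of that minimum viable file, the sketch below assembles one from a site name, a description, and a short list of links. It follows the layout described at llmstxt.org as best understood here (a title line, a quoted summary, then a list of annotated links). The "Key pages" section name, the function name, and all sample values are assumptions made for the example.

```python
def build_llms_txt(site_name: str, description: str,
                   links: list[tuple[str, str, str]]) -> str:
    """Build a minimal llms.txt body.

    links is a list of (title, url, note) tuples, one per important page.
    """
    lines = [
        f"# {site_name}",
        "",
        f"> {description}",
        "",
        "## Key pages",  # section name is an assumption, not a required label
        "",
    ]
    lines += [f"- [{title}]({url}): {note}" for title, url, note in links]
    return "\n".join(lines) + "\n"


# Hypothetical sample values; replace with your own site details.
SAMPLE = build_llms_txt(
    "Acme Billing",
    "Acme Billing is invoicing software for small agencies.",
    [
        ("Pricing", "https://example.com/pricing", "Plans and what each includes"),
        ("Docs", "https://example.com/docs", "Setup and API documentation"),
    ],
)
```

Saving the returned text as llms.txt at the site root gives the file the check in this step looks for.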

Step 4: The Schema Check (3 minutes)

Go to search.google.com/test/rich-results and test three URLs, one at a time: your homepage, your main product or service page, and one recent blog post.

For each URL, the tool will tell you what structured data it found (if any) and whether it is valid. You are looking for: does any schema exist? Is it valid? Does it use specific types (Article, Product, SoftwareApplication, Service) or just a generic WebPage?

If the tool returns "No items detected": your page has no structured data. AI systems reading this page have to infer everything about your brand from raw text, and inference is where hallucinations tend to come from.
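A developer can get a quick first read on the same question from a page's HTML before opening the tool. The sketch below lists the schema types declared in JSON-LD script blocks. It is not a substitute for the Rich Results Test: it does no validation, it ignores Microdata and RDFa markup, and the class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self.blocks.append("".join(self._buffer))
            self._in_json_ld = False


def schema_types(raw_html: str) -> list[str]:
    """Return the @type values declared in the page's JSON-LD, in document order."""
    collector = JsonLdCollector()
    collector.feed(raw_html)
    collector.close()
    found = []
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # unparseable block: skipped here, the real tool would flag it
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                continue
            graph = item.get("@graph")
            for node in graph if isinstance(graph, list) else [item]:
                if isinstance(node, dict) and "@type" in node:
                    declared = node["@type"]
                    found.extend(declared if isinstance(declared, list) else [declared])
    return found
```

An empty list corresponds to the "No items detected" case for JSON-LD, and a list containing only WebPage corresponds to the generic case described above.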

What to do: Flag the pages that returned "No items detected" and specify the schema type that fits: SoftwareApplication for a product page, TechArticle for a technical blog post, Service for a services page. Share our scoring signals guide with your developer as a reference for which properties to populate.
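To make the ticket concrete, it can help to show the developer what a specific schema type looks like on the page. The sketch below generates a JSON-LD script tag for a technical blog post using the TechArticle type. The property set shown (headline, author, datePublished, mainEntityOfPage) is an assumption chosen for illustration, not the list from the scoring signals guide, and the sample values are hypothetical.

```python
import json


def tech_article_json_ld(headline: str, author_name: str,
                         date_published: str, url: str) -> str:
    """Return a <script> tag containing TechArticle JSON-LD for one blog post."""
    data = {
        "@context": "https://schema.org",
        "@type": "TechArticle",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date, e.g. "2025-01-15"
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the surrounding script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical sample values; replace with the real post details.
SNIPPET = tech_article_json_ld(
    "How We Cut Page Weight in Half",
    "Jane Doe",
    "2025-01-15",
    "https://example.com/blog/page-weight",
)
```

The resulting tag goes in the page's server-rendered HTML, after which the URL can be re-run through the Rich Results Test to confirm the type is detected.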

Step 5: The Full Audit (3 minutes)

Go to websiteaiscore.com and submit your URL.

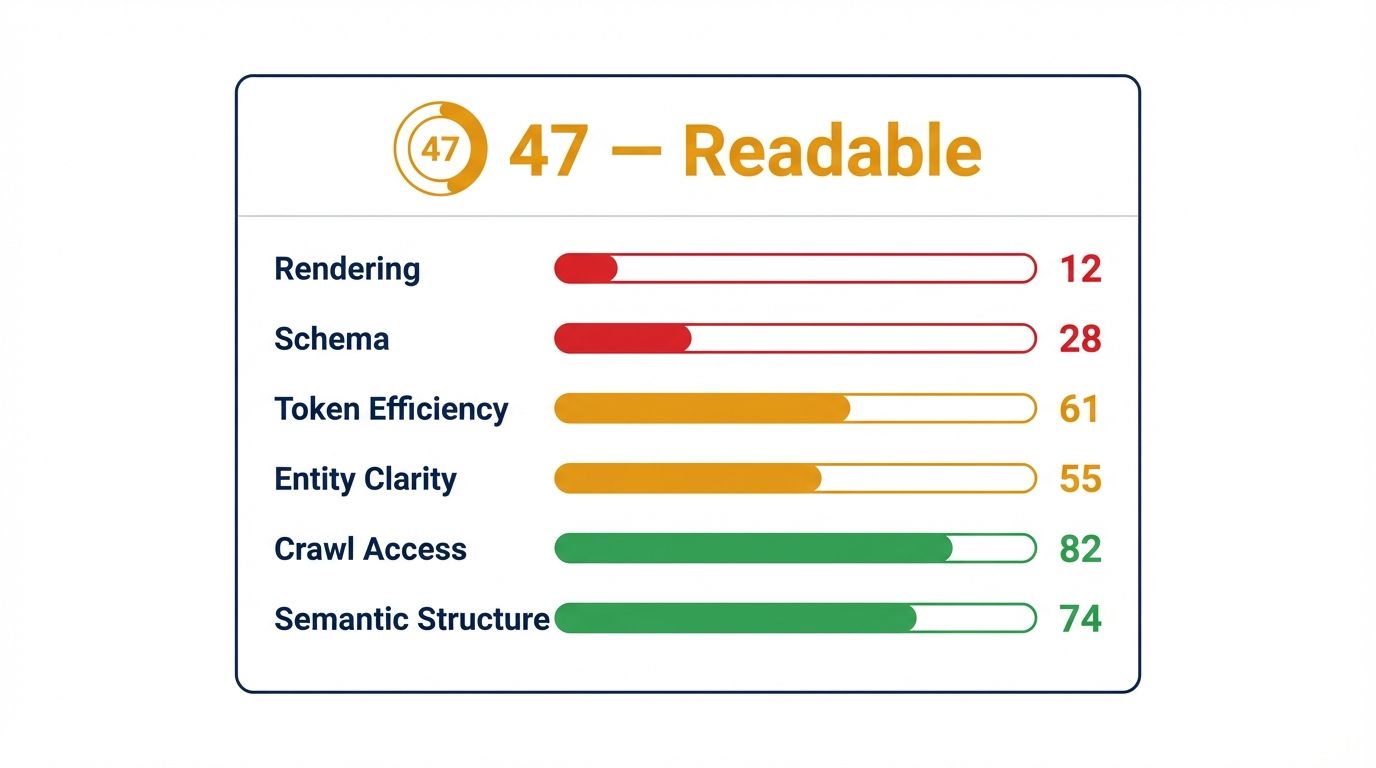

The engine runs all six signal checks automatically — rendering, schema, token efficiency, entity clarity, crawl access, and semantic structure — and returns a score with a prioritized breakdown of every issue found. Each issue comes with a plain-English explanation and a recommended fix.

This step consolidates everything you found in Steps 1 through 4 and surfaces issues those manual checks would miss — token efficiency ratio, entity disambiguation, heading hierarchy analysis, and the 100-Token Rule check are not things you can evaluate by eye.

What to do with the results: Take the priority fix queue from the audit report and turn each item into a work ticket with the audit explanation copied directly into the description. The audit report is written to be developer-readable. You do not need to translate it.

What Happens After the Audit

The audit gives you a baseline. The baseline gives you a priority stack. The priority stack gives your developer a sprint.

After each fix is deployed, re-submit the URL. The score either moves or it does not. If it moves in the signal you targeted: the fix landed. If it does not: something in the implementation did not work as expected, and you have a diagnostic rather than a mystery.

This loop — audit, fix, re-audit, confirm — is the entire AEO optimization process condensed into a repeatable cycle. The first run through the loop is always the highest-value one, because the lowest-hanging structural failures produce the biggest score deltas.

In our recent case study, a site starting at a score of 38 reached 91 after the first sprint alone. Most of the gain came from three targeted fixes completed in under 14 hours of developer time.

The audit is free. The window is open. Run it now.

References

- Website AI Score: Free AI readability audit engine. https://websiteaiscore.com

- Google Rich Results Test: Schema validation tool. https://search.google.com/test/rich-results

- llms.txt Standard: Implementation specification. https://llmstxt.org

- OpenAI GPTBot: Robots.txt guidance and user-agent documentation. https://platform.openai.com/docs/gptbot

- Website AI Score Case Study: Score 38 to 91 — full audit walkthrough. https://websiteaiscore.com/blog/saas-landing-page-aeo-audit-score-38-to-91